Unmoderated card sorting tools are a great way to reach a large number of geographically dispersed participants for your card sorting study. Mass emails can reach many potential participants, but the challenge is getting them to take part. Unfortunately, few people actually open and read these emails, and even fewer take the time to complete the study.

How do you motivate people to participate?

So how do you motivate people to participate in Web-based card sorting or another type of unmoderated, remote user research study? Incentives! But what type of incentive works best for a mass audience? You can give an incentive to each participant, but with a large number of participants that can be very expensive. And if you give each participant a small incentive of, say, $5.00, how motivating is that?

A common alternative is a sweepstakes-style drawing, in which one participant out of hundreds wins a gift card or other prize. But how effective is this kind of incentive at increasing the number of participants? Does it affect the quality of the results by attracting people who are interested only in the incentive and not in completing the study seriously? Can you motivate people to participate in a study without any incentive? What other variables affect participation?

Our study

We sought to answer these questions through a recent card sorting study for an ecommerce client. As part of a website redesign, we were reorganizing the products in their store. Customers of this company pay a membership fee to join and are required to purchase a minimum amount of products each month, so they have a closer connection to the company than in the average business-consumer relationship.

We wanted at least 30 participants, based on a study by Tullis and Wood, which found that 20 to 30 participants was the minimum needed for valid results. Cluster analysis structures from samples of more than 30 participants were very similar to those of the full set of participants.

Unlike traditional card sorting, which requires meeting with each person, having them sort physical cards, and then recording the data manually, Web-based card sorting makes it easy to reach far more than 30 people with very little extra effort per participant. After emailing a large group of representative participants, the researcher can “sit back and relax” while the participants complete the study on their own time and the card sorting software records and compiles the results. At the end of the study, the researcher can use the card sorting software to analyze and manipulate the data, which it displays in a variety of ways.
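Card sorting software typically summarizes open sort data as a pairwise similarity matrix. For every pair of cards, it records the share of participants who put both cards in the same group, and cluster analysis then works from that matrix. The sketch below is a minimal illustration. It assumes a hypothetical data layout in which each participant's sort maps a category name to the cards in it, and the product names are invented. Real tools export their own formats, and this is not how any particular tool computes its results.

```python
from itertools import combinations

# Hypothetical layout: one participant's open sort, mapping the category
# name they chose to the cards they placed in it.
Sort = dict[str, list[str]]


def similarity_matrix(sorts: list[Sort], cards: list[str]) -> dict[tuple[str, str], float]:
    """For each unordered card pair, return the fraction of participants
    who placed both cards in the same category. Assumes each card appears
    in at most one category per participant."""
    if not sorts:
        raise ValueError("need at least one sort")
    # Keys are alphabetically ordered pairs, so (a, b) and (b, a) share a cell.
    counts = {pair: 0 for pair in combinations(sorted(set(cards)), 2)}
    for sort in sorts:
        for group in sort.values():
            for pair in combinations(sorted(set(group)), 2):
                if pair in counts:  # ignore cards outside the study's card list
                    counts[pair] += 1
    return {pair: n / len(sorts) for pair, n in counts.items()}


sorts = [
    {"Cleaning": ["bleach", "sponge"], "Kitchen": ["foil", "wrap"]},
    {"Household": ["bleach", "sponge", "foil"], "Food storage": ["wrap"]},
]
cards = ["bleach", "sponge", "foil", "wrap"]
sim = similarity_matrix(sorts, cards)
print(sim[("bleach", "sponge")])  # 1.0: both participants grouped these
print(sim[("foil", "wrap")])      # 0.5: one of two participants grouped these
```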

From previous studies we found that, without a facilitator, it can be difficult to get people to spend 20 minutes thoughtfully working on a Web-based card sorting study. On some projects we have offered incentives, and this seemed to increase the number of participants. On other projects, our clients have refused to offer incentives, and we have had fewer participants, people willing to work on the study “out of the kindness of their hearts.”

So for our recent ecommerce client, we recommended entering the participants in a drawing for an iPod. For the modest cost of about $300, we could possibly get hundreds of participants.

Do incentives draw in the wrong element?

Our client did not object to the cost but was concerned about the possible negative effect an incentive might have on the data. They worried that some people might be motivated solely by the incentive and might not take the time or effort to complete the study thoughtfully. We had never considered this before. Our client felt that their customers would participate out of their strong affiliation with the company and their desire to help it.

Let’s find out with two studies!

We agreed to run two identical studies, differing only in that one offered entry into a drawing for an iPod and the other offered no incentive. Our hypothesis was that the incentive study would attract many more participants than the non-incentive study, and that the quality of the data from the two studies would not differ. We expected participants in the incentive study to complete it as seriously and thoughtfully as those in the non-incentive study.

Although we, and probably many others, have run Web-based card sorting studies with and without incentives and observed differences in the number of participants, we had never run two identical studies to isolate the effect of an incentive. In those earlier studies, other factors could have contributed to the difference in participation, including the characteristics of the audience, the type of incentive, how easy or difficult the study was to complete, the company's credibility with the audience, and the existing communication relationship between the company and the potential participants. Running two identical studies would control for those variables and reveal the true effect of the incentive.

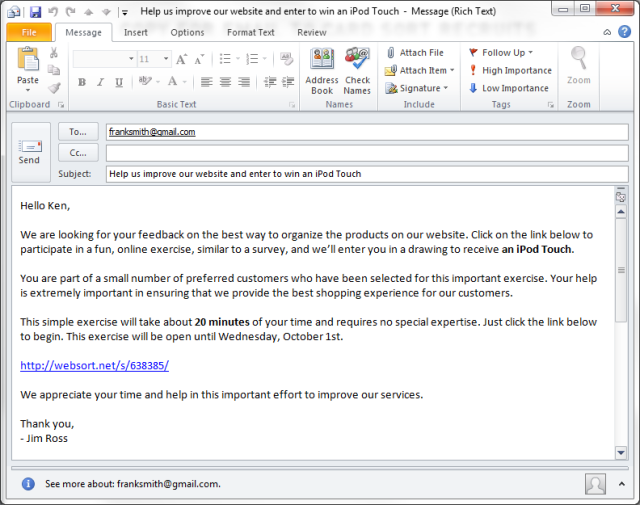

From our client’s customer database, we carefully selected two representative samples of 5,000 customers each and wrote an email for each study. The emails were nearly identical, except that the incentive email also highlighted the incentive in the subject line and in the body. Both emails stressed the importance of customer input into the design of the site, explained how participants’ help would create a better shopping experience for all customers, and set the expectation that the exercise would be fun and take about 20 minutes.
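The article doesn't say how the two samples were drawn. This sketch assumes one simple approach: a single random draw without replacement, split in half, so no customer can land in both studies. `split_samples` and the customer IDs are hypothetical. If the database had segments that needed balancing, such as region or membership length, a stratified draw would be a better fit.

```python
import random
from typing import Optional


def split_samples(
    customer_ids: list[str], size: int, seed: Optional[int] = None
) -> tuple[list[str], list[str]]:
    """Draw two disjoint simple random samples of `size` customers each."""
    if 2 * size > len(customer_ids):
        raise ValueError("not enough customers for two disjoint samples")
    rng = random.Random(seed)  # a fixed seed makes the split reproducible
    drawn = rng.sample(customer_ids, 2 * size)  # sampling without replacement
    return drawn[:size], drawn[size:]


# Hypothetical customer database of 40,000 unique IDs.
customers = [f"cust-{i:05d}" for i in range(40_000)]
incentive_group, control_group = split_samples(customers, 5_000, seed=2024)
print(len(incentive_group), len(control_group))  # 5000 5000
```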

Both open card sort studies contained the same 60 cards, each naming a common household product. The brand names of these products had been made generic through an exercise in earlier focus groups. With only 60 cards and everyday, easily understood products, we felt the study would not be difficult to complete.

The study ran for six days. We sent the initial emails on a Thursday and a reminder email the following Monday, and we ended the study the next Wednesday morning.

The findings

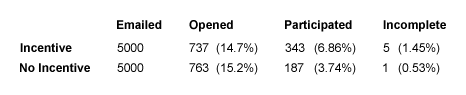

Surprisingly few customers opened the emails, whether or not the subject line mentioned an incentive. As the table shows, only 14.7% (737) of the 5,000 customers opened the incentive email, and 15.2% (763) opened the non-incentive email. Our client regularly sends its customers several emails per month, and many of these emails end up in spam folders and receive little attention.

The incentive was persuasive.

The incentive did significantly increase participation among those who opened the emails: 46.5% of those who opened the incentive email participated in the study, compared with 24.5% of those who opened the non-incentive email. Overall, though, very few of the 10,000 people emailed took part. Of the 5,000 customers in each sample, 343 (6.86%) completed the incentive study and 187 (3.74%) completed the non-incentive study.
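These participation figures reduce to three ratios per study. The sketch recomputes them from the reported counts. It also adds a pooled two-proportion z-test on completion among openers, which is one standard way to check whether a difference like 46.5% versus 24.5% is larger than chance would explain. The article doesn't say which test, if any, it used. This test assumes the two groups are independent and the counts are large enough for the normal approximation. `Arm` and `two_proportion_z` are illustrative names.

```python
import math
from dataclasses import dataclass


@dataclass
class Arm:
    """Counts for one study arm (names are illustrative)."""
    emailed: int
    opened: int
    completed: int

    @property
    def open_rate(self) -> float:
        return self.opened / self.emailed

    @property
    def completion_among_openers(self) -> float:
        return self.completed / self.opened

    @property
    def overall_rate(self) -> float:
        return self.completed / self.emailed


def two_proportion_z(x1: int, n1: int, x2: int, n2: int) -> tuple[float, float]:
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    z = (p1 - p2) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # P(|Z| >= |z|) under the null
    return z, p_value


incentive = Arm(emailed=5_000, opened=737, completed=343)
no_incentive = Arm(emailed=5_000, opened=763, completed=187)

for name, arm in [("incentive", incentive), ("no incentive", no_incentive)]:
    print(
        f"{name}: opened {arm.open_rate:.2%}, "
        f"{arm.completion_among_openers:.2%} of openers completed, "
        f"{arm.overall_rate:.2%} of all emailed completed"
    )

z, p = two_proportion_z(incentive.completed, incentive.opened,
                        no_incentive.completed, no_incentive.opened)
print(f"z = {z:.1f}, p = {p:.1e}")
```

With these counts, z comes out near 8.9, far beyond conventional thresholds. That is consistent with describing the increase among openers as significant.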

Although the incentive was more persuasive than no incentive, more than enough customers were willing to help improve the customer experience without one. The 187 participants were far more than the 30 we needed. In this case, the customers’ commitment to the company was enough to provide willing and motivated participants.

The incentive didn’t cause people to take the study less seriously.

We did find five participants in the incentive study (1.45%) and one (0.53%) in the non-incentive study who did not complete the study correctly. Some did not sort or name any items and simply submitted their results. Others sorted a few items and then submitted without sorting the rest. These may have been legitimate errors, people who just gave up, or people intentionally trying to get credit for participating without doing the sorting. The card sorting software allowed us to identify these participants and delete them from the results.

But did the incentive attract participants who were interested only in winning the iPod and did not take the study seriously? To find out, we looked very carefully at the category names each participant created in both studies and noted any oddities. An equal number of participants in both studies did not name any of their categories. When we examined these results, we found that most of these people had carefully sorted the items into logical categories. They either did not realize that they needed to name the categories or could not figure out how to do so. We realized that the card sorting software did not warn participants that they had not named any categories.
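This screening can be partly automated. The sketch flags two patterns the article describes. The first is a submission in which some cards were never sorted; the authors deleted these. The second is a submission with no named categories; on inspection, these were mostly careful sorts worth keeping. The sketch assumes a hypothetical export in which an unnamed category has an empty name. The `Category` type and `review_flags` function are illustrative, and the flags are prompts for human review, not automatic deletion rules.

```python
from dataclasses import dataclass


@dataclass
class Category:
    name: str          # assumed to be "" when the participant never named it
    cards: list[str]


def review_flags(categories: list[Category], cards: list[str]) -> list[str]:
    """Return review flags for one participant's open sort."""
    flags = []
    placed = {card for cat in categories for card in cat.cards}
    unsorted = set(cards) - placed
    if unsorted:
        # Submitted empty or abandoned part way: candidates for deletion.
        flags.append(f"incomplete: {len(unsorted)} of {len(set(cards))} cards unsorted")
    if categories and all(not cat.name.strip() for cat in categories):
        # The sorting itself may still be careful; inspect before excluding.
        flags.append("no category names")
    return flags


cards = ["bleach", "sponge", "foil", "wrap"]
print(review_flags([], cards))  # ['incomplete: 4 of 4 cards unsorted']
print(review_flags([Category("", ["bleach", "sponge"]),
                    Category("", ["foil", "wrap"])], cards))  # ['no category names']
print(review_flags([Category("Cleaning", ["bleach"])], cards))  # ['incomplete: 3 of 4 cards unsorted']
```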

The card sorting results were nearly identical.

The two studies categorized the cards in virtually identical ways, as Tullis and Wood’s findings would predict for large samples drawn from the same audience.
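The article doesn't describe how it judged the two sets of results to be virtually identical. One common way to quantify agreement between two card sorts is to correlate their pairwise similarity matrices. Each cell of such a matrix holds the share of participants who grouped a given pair of cards together. A Pearson correlation near 1 means the two samples grouped the cards in nearly the same way. The function names and the three-pair matrices below are hypothetical.

```python
import math

Pair = tuple[str, str]


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if sd_x == 0 or sd_y == 0:
        raise ValueError("correlation is undefined for a constant matrix")
    return cov / (sd_x * sd_y)


def matrix_agreement(sim_a: dict[Pair, float], sim_b: dict[Pair, float]) -> float:
    """Correlate two similarity matrices over the card pairs they share."""
    pairs = sorted(sim_a.keys() & sim_b.keys())
    return pearson([sim_a[p] for p in pairs], [sim_b[p] for p in pairs])


# Made-up shares of participants grouping each card pair, one matrix per study.
incentive_sim = {("bleach", "sponge"): 0.9, ("bleach", "foil"): 0.1, ("foil", "sponge"): 0.2}
control_sim = {("bleach", "sponge"): 0.8, ("bleach", "foil"): 0.2, ("foil", "sponge"): 0.3}
print(round(matrix_agreement(incentive_sim, control_sim), 3))
```

Both matrices must key each pair the same way (here, alphabetically ordered tuples) for the shared-pair lookup to line up.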

The results were virtually identical, and there was only a slight difference in the number of people who did not complete the study correctly. Nearly all participants in both studies also completed the sort carefully. We therefore concluded that the incentive did not affect the quality of the study data.
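The comparison of incorrectly completed sorts (5 of 343 versus 1 of 187) involves counts too small for a normal approximation. The article bases its conclusion on inspecting the data, not on a statistical test. As a supplementary check, a two-sided Fisher's exact test on the 2×2 table can be written with only the standard library. `fisher_exact_two_sided` is an illustrative implementation. It uses the common convention of summing the probabilities of all tables no more probable than the observed one.

```python
from math import comb


def fisher_exact_two_sided(a: int, b: int, c: int, d: int) -> float:
    """Two-sided Fisher's exact test p-value for the table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    total = comb(row1 + row2, col1)

    def prob(x: int) -> float:
        # Hypergeometric probability of x in the top-left cell, margins fixed.
        return comb(row1, x) * comb(row2, col1 - x) / total

    observed = prob(a)
    support = range(max(0, col1 - row2), min(row1, col1) + 1)
    probs = [prob(x) for x in support]
    # The relative tolerance keeps tables tied with the observed one despite rounding.
    return sum(p for p in probs if p <= observed * (1 + 1e-7))


# Rows: incentive, no incentive. Columns: completed incorrectly, completed correctly.
p = fisher_exact_two_sided(5, 343 - 5, 1, 187 - 1)
print(f"p = {p:.2f}")
```

The result, about 0.67, is far from conventional significance thresholds, consistent with reading the difference as slight. With so few flagged submissions, though, the test has little power to detect a real difference, so it supports the conclusion rather than proving it.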

Lessons Learned

- An incentive is an effective way to increase the number of participants in a Web-based study with little effect on the quality of the data.

- Incentives for Web-based card sorting are very inexpensive compared with other user research activities that pay each participant individually.

- The type of incentive you offer should be appropriate to the audience. It should be motivating enough for the time commitment involved.

- Sometimes you don’t need to offer an incentive. Appealing to altruism and the benefit to potential participants can motivate them to help out.

- Whether to offer an incentive depends on several factors: the type of participants you are trying to recruit, the relationship the participants have with the company, the existing communication the company already has with the potential participants, and the difficulty and time involved in completing the study.

- Even if you don’t need to offer an incentive to get participation, it is nice to reward people for their efforts and time.

- Even if some participants don’t take the study seriously or make errors, careful analysis of the data can identify them so you can delete their data.

- When you have many participants, the effect of a few who didn’t take it seriously is small.

- Whether or not you offer an incentive, it is important to craft the email carefully so that it conveys the purpose of the study clearly and succinctly. You have only a brief moment to explain the study and motivate people to participate. Save the study instructions for after participants click the link and reach the card sorting interface.

- Emails to participants should be sent out from the company they are familiar with. If you are a consulting company working with a client, the emails should come from the client for credibility.

- Some card sorting software allows you to upload your company logo (or your client’s logo). This maintains credibility after participants click the link in the email. They trust that they are actually doing the study for the company that they know.

- You may need to email a large number of people to get enough participants. For example, if we had wanted 50 participants, our 6.86% completion rate with an incentive means we would have needed to email roughly 730 people (50 ÷ 0.0686 ≈ 729).
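The estimate in the last bullet is a division rounded up: target participants divided by the expected completion rate. `emails_needed` is a hypothetical helper that works from expected values. Actual completions will vary around the target, and your own audience's rates may differ from these, so treat the result as a floor rather than a guarantee.

```python
import math


def emails_needed(target_participants: int, completion_rate: float) -> int:
    """Smallest email count whose expected completions reach the target."""
    if not 0 < completion_rate <= 1:
        raise ValueError("completion_rate must be in (0, 1]")
    return math.ceil(target_participants / completion_rate)


# Completion rates observed in the two studies (completed / emailed).
print(emails_needed(50, 0.0686))  # 729, with the iPod drawing
print(emails_needed(50, 0.0374))  # 1337, with no incentive
```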

Web-based research methods such as card sorting make it possible to reach a large number of participants, but convincing them to participate is the first hurdle. With these tips, you can get more participation, and better participation, in your research studies.

References

Tullis, Tom and Wood, Larry. “How Many Users are Enough for a Card-Sorting Study?” UPA 2004 Proceedings.