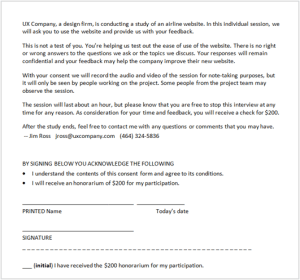

Are consent forms always necessary? We’re told that consent forms are an indispensable part of ethical user research. Consent forms are the vehicle for giving and getting informed consent: they tell participants what the study will entail, and they let participants indicate consent with a signature and date.

Yet consent forms can conflict with the informal, friendly rapport that we try to establish with participants. Anything you present for people to sign immediately looks like a legal document or liability waiver. It puts them on guard.

That’s ironic because consent forms are the opposite of legal waivers. Waivers are created to protect the interests of the company that creates them, while consent forms are created to protect the rights of the people signing them. Yet most participants assume they are signing a typical legal waiver.

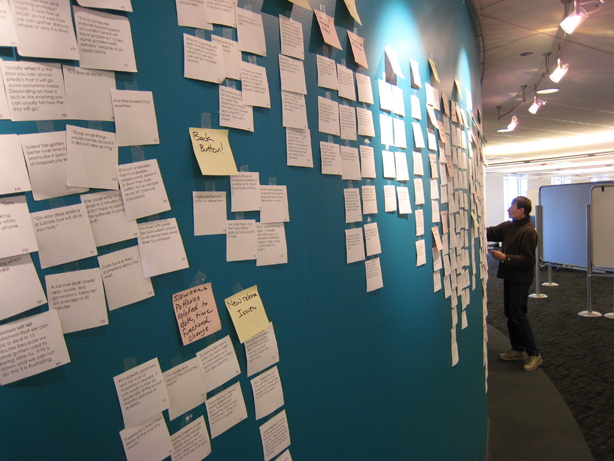

Consent forms seem acceptable in more formal user research situations, such as usability testing and focus groups, but they seem odd and even off-putting in more informal situations. I’ve found them especially awkward in field studies at people’s offices. You strive to set up an informal situation, such as asking someone to show you how they create reports or asking them to try out a new design for an expense report application. But when you show up with a consent form for them to sign, it shatters the informal, comfortable rapport you tried so hard to establish. I’ve had people react to consent forms in this kind of situation with, “Hey! I thought we were just talking here.” How many times in your working life has someone shown up to a meeting with a legal document for you to sign?

So I say use your judgment. When a consent form feels like it would be overly formal, don’t use it (unless your legal department requires it). Instead, get informed consent informally by email. “Inform” with your email describing what will take place, and get “consent” from their reply email agreeing to participate. At the start of the session, you can inform them again with a summary of what you’ll be doing. They will then give consent by continuing to participate in the session.

A good guideline is how comfortable or uncomfortable you feel when giving participants the consent form. If you feel uncomfortable, you’re probably breaking a group norm, so find a more acceptable way of getting informed consent.