Reduce, Reuse, and Recycle

When you’ve been regularly writing online articles for 13 years, averaging six articles per year, you occasionally come up with a great idea for an article, only to realize later that you’ve already written about that topic. That’s happened to me a few times, most recently with an idea for an article about getting up to speed on the subject matter involved in a project. I realized that I had already written that article almost ten years ago. On October 6, 2011, I published “Learning the Subject Matter” on Johnny Holland, a UX magazine that stopped publishing new articles a few years later.

I thought about writing an updated version with what I’ve learned in the last ten years, but it didn’t make sense while the original article was still online. However, I recently went looking for that article and found, to my distress, that JohnnyHolland.com is no longer online. It went offline sometime within the last year.

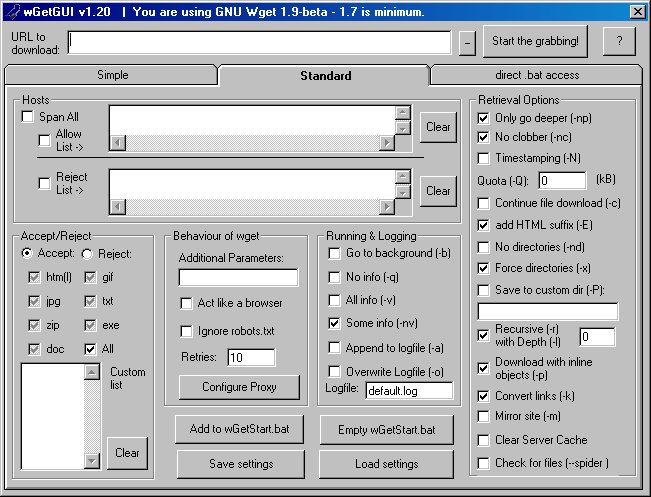

It seemed to be a sign that it was time to finally write that update. My original Word documents of those articles had disappeared a few computers ago, but luckily I was able to find my Johnny Holland articles on the Internet Wayback Machine, and I downloaded the text. So I recycled the original article and wrote an updated version, revising it significantly and adding and removing pieces based on my experiences from the last ten years.

On June 21, 2021, almost ten years later, I published the updated version, “Learning Complex Subject Matter,” on UXmatters.

Image reduce-reuse-recycle-repeat by Phil Gibbs is licensed under CC BY 2.0